We dug into our own data to find out how the biggest companies in the world are using Google Cloud in production. We also asked a few cloud engineers from these companies to share any interesting use cases/initiatives. Here are real-world examples of how they use it.

Audio Streaming · Stockholm, Sweden

Pub/Sub

GKE

Dataflow

BigQuery

Bigtable

Spotify is the world's most popular audio streaming platform, with over 700 million monthly active users and 290 million Premium subscribers across 180+ markets.

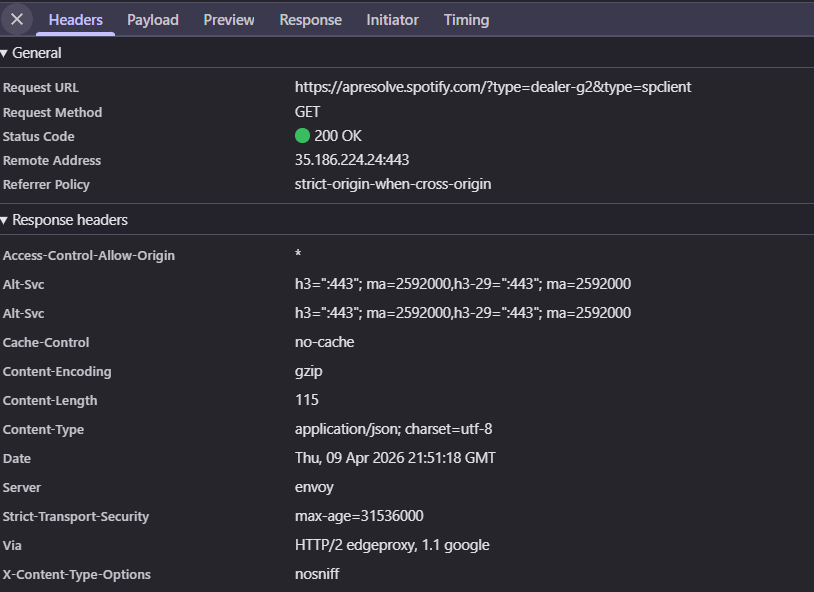

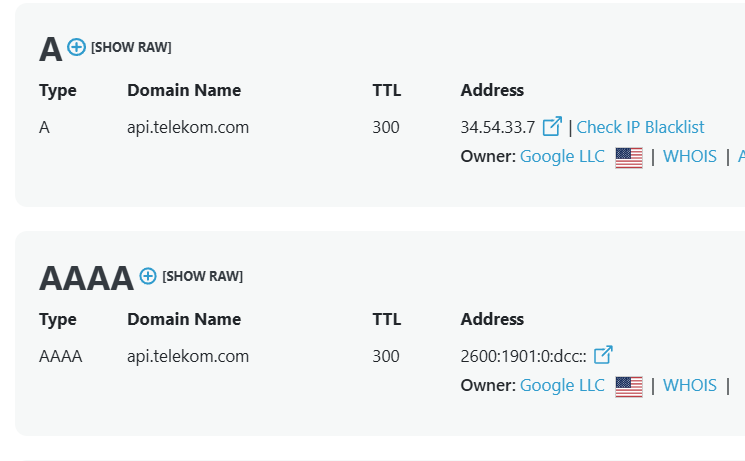

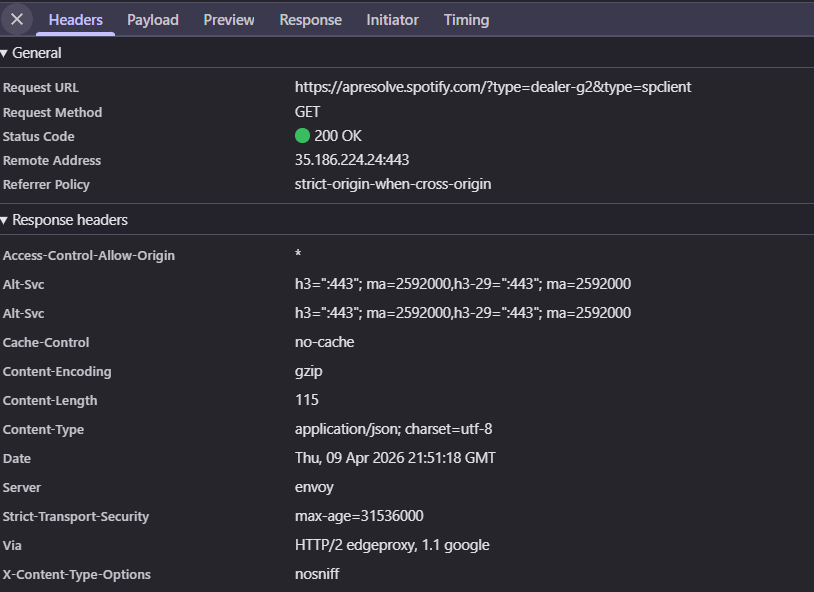

Google Cloud isn't just part of Spotify's infrastructure; it's essentially all of it. API calls to open.spotify.com and apresolve.spotify.com both come back with a via: 1.1 google header, which indicates that core platform traffic routes through Google's network.
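The header check described above can be sketched in a few lines. This is a minimal illustration, not the method used to gather the data in this article: `served_via_google` is a hypothetical helper, and it only inspects a headers mapping you have already fetched yourself.

```python
def served_via_google(headers):
    """Return True if any hop in the Via header names 'google' as the proxy.

    `headers` is a plain mapping of response header names to values.
    """
    for name, value in headers.items():
        if name.lower() != "via":
            continue
        # A Via header can list several hops, separated by commas.
        for hop in value.split(","):
            parts = hop.strip().split()
            # Each hop looks like "<protocol-version> <received-by>".
            if len(parts) >= 2 and parts[1].lower() == "google":
                return True
    return False


print(served_via_google({"Via": "1.1 google"}))
print(served_via_google({"via": "1.1 varnish, 1.1 google"}))
print(served_via_google({"Server": "nginx"}))
```

A matching header tells you a Google front end handled the request. It says nothing about which services run behind it.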

Two systems stand out as the load-bearing pieces of the business: the data pipeline that captures what users are doing, and the commerce stack that turns free listeners into paying subscribers.

The first is the event delivery pipeline. Every play, skip, save, and search a user makes generates an event, and Spotify processes over a trillion of these events per day. The pipeline that handles them is built entirely on GCP, with Pub/Sub absorbing the firehose of incoming events, services on GKE validating and enriching them, and Dataflow handling the heavier aggregations that feed downstream systems. Spotify relies on this pipeline for almost everything that touches data, including recommendations, royalty calculations to artists, and financial reporting, which means reliability and speed are non-negotiable.
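To make the three stages concrete, here is a toy, in-memory sketch of a validate, enrich, and aggregate flow. It is not Spotify's code and uses no GCP client libraries: the function names, event fields, and the market lookup are all assumptions made for illustration, with plain Python standing in for Pub/Sub, the GKE services, and Dataflow.

```python
from collections import Counter

VALID_TYPES = {"play", "skip", "save", "search"}


def validate(event):
    """Keep only events with a known type and a non-empty user id."""
    return event.get("type") in VALID_TYPES and bool(event.get("user_id"))


def enrich(event, user_markets):
    """Attach the user's market, or 'unknown' when there is no record."""
    enriched = dict(event)
    enriched["market"] = user_markets.get(event["user_id"], "unknown")
    return enriched


def aggregate(events):
    """Count events per (market, type) pair."""
    return Counter((e["market"], e["type"]) for e in events)


def run_pipeline(raw_events, user_markets):
    valid = (e for e in raw_events if validate(e))
    enriched = (enrich(e, user_markets) for e in valid)
    return aggregate(enriched)


raw = [
    {"type": "play", "user_id": "u1"},
    {"type": "skip", "user_id": "u1"},
    {"type": "play", "user_id": "u2"},
    {"type": "like", "user_id": "u2"},  # unknown type, dropped
    {"type": "play", "user_id": ""},    # missing user, dropped
]
counts = run_pipeline(raw, {"u1": "SE", "u2": "US"})
print(counts)
```

In a real deployment each stage would be a separate system with its own scaling and retry behavior. The sketch only shows how the stages hand data to one another.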

The second is the commerce stack, which is how Spotify actually makes money. The Subscriptions organization runs all of it on GCP, including merchandising, upsell journeys, billing, and payments for those 290 million Premium subscribers. The backend is built primarily in Java, with BigQuery powering the analytics that drive conversion experiments and Bigtable serving the operational data the services depend on at runtime. Payments and billing sit on top of that layer, with PostgreSQL holding transactional state and a payments SDK processing pay-in flows across global markets.

Put those two systems together and you get the shape of Spotify's relationship with GCP. The pipeline that captures user behavior and the stack that monetizes it are both running on the same cloud, built by teams using the same tools.

Internet & Media · Sunnyvale, California

BigQuery

Dataflow

Dataproc

Composer

Pub/Sub

Looker

Vertex AI

Yahoo operates a portfolio of consumer products including Yahoo Mail, Yahoo Finance, Yahoo Sports, Yahoo News, and AOL, reaching roughly 900 million monthly active users globally.

Yahoo is currently moving its entire Mail infrastructure to Google Cloud. It's a 100% move to native public cloud, with petabytes of data and 220 million monthly active users going along with it. Mail handles billions of inbound connections per day and trillions of messages, so this is a serious undertaking. It's big enough that Yahoo has a dedicated finance lead working with the CTO's team on cloud spend and the GCP migration as a company-wide project.

The Mail Analytics Infrastructure team is building the data side on GCP. BigQuery is the data warehouse. Composer, which is Google's managed Airflow, orchestrates the batch pipelines. Dataflow handles stream processing, Dataproc runs the Spark workloads, and Pub/Sub handles event streaming between services. Finally, Looker and Looker Studio sit on top for analytics.

Sitting on top of that data foundation is the Mail Intelligence team, which handles the ML side. They build personalization features that work across billions of mail messages, using NLP, generative AI, and traditional ML. Training and serving run on Vertex AI, Google's managed ML platform, alongside Hugging Face, PyTorch, and TensorFlow on the modeling side. The team also uses LangChain, LangGraph, and Google's Agent Development Kit to build agentic features on top of all of it.

For a company that ran its own infrastructure for most of its history, including some of the original work on Hadoop and distributed systems, moving to GCP-native is a real shift. The bet is that managed services, faster ML iteration, and predictable cloud costs are worth it. The work is happening now, across the products that several hundred million people use every day.

Retail & Restaurant Technology · Atlanta, Georgia

GKE

Apigee

Anthos Service Mesh

Kubernetes

NCR Voyix is a global provider of digital commerce technology for retailers and restaurants, powering point-of-sale systems, ecommerce platforms, and store infrastructure for some of the world's largest retail chains.

Two systems make up the bulk of what NCR Voyix actually sells: the Voyix Commerce Platform that runs in the cloud, and NCR Edge that runs inside physical stores. Both are built on GCP, and the interesting part is how they're designed to work together.

The first is the Voyix Commerce Platform, which is the cloud backbone behind millions of retail and restaurant transactions globally. It's an API-first system handling everything from product catalogs to sales transactions to mobile services for major retailers across the US, Europe, the UK, Asia, and Australia. The architecture runs on GKE for the underlying container platform, Apigee for managing the APIs that retailers and partners build against, and Anthos Service Mesh for handling secure communication between services. Azure shows up as a secondary cloud, but GCP is the primary one.

The second is NCR Edge, which solves a problem most cloud companies don't have to think about. Retail stores need their point-of-sale systems to keep working even when the internet drops, so the software has to run locally on store hardware while still syncing with the cloud when it can. NCR Edge handles that hybrid setup, with Kubernetes running at the store level and GCP as the cloud layer the stores sync back to. A dedicated team called the Foundation squad owns the entire build and release lifecycle for the platform, from CI/CD pipelines to the Bazel build system that compiles it all.
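The "keep selling offline, sync when you can" behavior is often built as a store-and-forward queue. The sketch below shows that general pattern under stated assumptions. It is not NCR Edge's implementation, and `StoreOutbox`, `upload`, and the transaction fields are hypothetical names.

```python
from collections import deque


class StoreOutbox:
    """Transactions recorded locally and uploaded when the link allows."""

    def __init__(self):
        self._pending = deque()

    def record(self, transaction):
        # Always succeeds: the sale is saved locally, online or not.
        self._pending.append(transaction)

    def pending_count(self):
        return len(self._pending)

    def sync(self, upload):
        """Try to upload pending transactions in order.

        `upload` is any callable that sends one transaction to the cloud
        and raises ConnectionError when the link is down. Returns the
        number of transactions uploaded in this attempt.
        """
        sent = 0
        while self._pending:
            try:
                upload(self._pending[0])
            except ConnectionError:
                break  # keep the rest queued for the next attempt
            self._pending.popleft()
            sent += 1
        return sent


cloud = []
link = {"online": False}


def upload(txn):
    if not link["online"]:
        raise ConnectionError("store is offline")
    cloud.append(txn)


outbox = StoreOutbox()
outbox.record({"id": 1, "total": 12.50})
outbox.record({"id": 2, "total": 3.99})
first_attempt = outbox.sync(upload)   # link is down, nothing is sent
link["online"] = True
second_attempt = outbox.sync(upload)  # link is back, queue drains in order
print(first_attempt, second_attempt, outbox.pending_count())
```

A transaction leaves the queue only after its upload succeeds, so a dropped connection delays the sync without losing sales.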

The GCP relationship also extends into how NCR Voyix goes to market. Sales engineers covering retail and federal government accounts are expected to hold Google Cloud certifications, which signals the sales motion is built around GCP capabilities rather than treating it as one of several interchangeable clouds.

Enterprise Technology · Lincolnshire, Illinois

GKE

Pub/Sub

Cloud Functions

Firebase

BigQuery

Vertex AI

Zebra Technologies makes the hardware and software that powers frontline operations globally, including enterprise Android devices, barcode scanners, and intelligent platform software used across retail, logistics, healthcare, and manufacturing.

Most cloud companies don't have to think about physical hardware sitting in warehouses, hospitals, and store backrooms. Zebra does. They sell millions of enterprise Android devices to customers who need to provision them, push policies to them, update them, and pull telemetry from them at global scale. The system that handles all of that is Zebra's Cloud Device Management platform, and it runs on GCP.

The platform exists to solve a specific problem: when a logistics company buys 50,000 Zebra scanners and deploys them across 200 warehouses, someone has to register every device, enforce security policies, push over-the-air updates, monitor health, and respond when something breaks. Doing that manually is impossible at that scale, so it has to happen through the cloud.

Zebra's Cloud Device Management platform is built as a set of cloud microservices that integrate with Android Enterprise APIs to handle the actual device control, including provisioning, policy enforcement through Android's DevicePolicyManager, and orchestration of remote commands. The underlying services run on GKE, which is Google's managed Kubernetes for running containerized applications at scale. Pub/Sub handles the event-driven messaging pipelines that ingest telemetry from devices and distribute policies back out. Cloud Functions handles the smaller serverless workloads that fire on specific events. And Firebase backs the parts that need real-time sync with devices in the field. The whole thing is built for multi-tenant deployments, since different customers need their fleets isolated from each other.

Zebra's other major products run on the same cloud. Their Workcloud Sync product, which provides real-time collaboration tools for frontline workers, is built on GCP using Go, Java Spring Boot, AlloyDB, and Vertex AI. Their VisibilityIQ supply chain visibility platform relies on BigQuery, Dataflow, Firebase, and Looker for analytics. Across all of these products, GCP is Zebra's default cloud for building production infrastructure.

Put it all together and Zebra's relationship with GCP comes down to one thing: managing the gap between the cloud and the physical world. Their devices live in warehouses, hospitals, and stores, but the systems that provision them, secure them, update them, and analyze what they're doing all live in Google Cloud.

Enterprise Technology · Cambridge, Massachusetts

Apigee

Chronicle

Security Command Center

Pegasystems builds enterprise AI decisioning and workflow automation software, serving global clients across financial services, healthcare, government, and telecommunications.

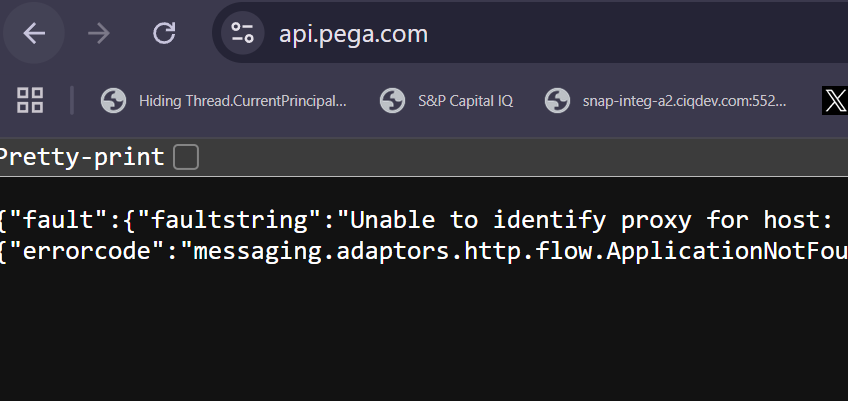

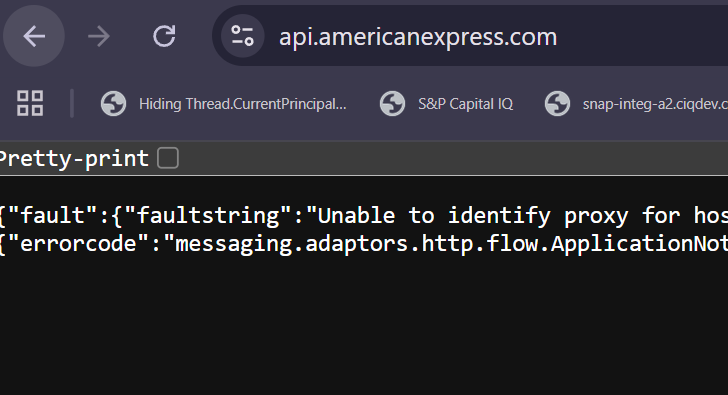

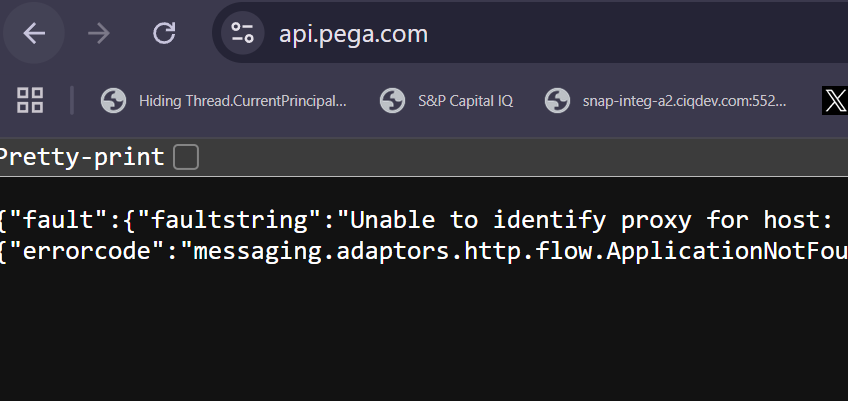

Pega's public API runs on Google Cloud. A request to api.pega.com hits Apigee, Google's API management platform, which handles routing, authentication, and traffic policies before passing the call through to the right backend service. That endpoint is how Pega's customers integrate their own systems with the platform, creating cases, taking actions on assignments, fetching workflow data, all of it programmatically. For a SaaS company whose product is built around exposing process automation as a service, the API gateway is effectively the front door, and Apigee is what's standing at it.

A request to api.pega.com returns an Apigee fault message, confirming Pega's API edge runs on Google's API management platform.

Pega also runs its security operations on Google Cloud. The Cloud Security Operations Center, the team that monitors and defends Pega Cloud, uses Google Chronicle (now marketed as Google SecOps) as its SIEM. Chronicle ingests logs from across the environment, correlates events, and surfaces the patterns that indicate a real threat. Pega's CSOC team writes detection rules in Chronicle's YARA-L language and builds the automated response playbooks that analysts use when alerts fire.

The logs feeding Chronicle come from across the stack: Cloud Audit, Security Command Center, VPC Flow, WAF, plus GuardDuty findings from the AWS side of the platform. Detections are mapped against the MITRE ATT&CK cloud matrix to make sure coverage matches the threats that actually target cloud infrastructure.
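Checking coverage against ATT&CK boils down to a set difference: the techniques you care about minus the techniques your rules are tagged with. The sketch below illustrates that idea only. The rule names are hypothetical, the technique selection is an assumption rather than Pega's actual list, and it is plain Python rather than YARA-L.

```python
# Techniques the team wants covered. The IDs are real MITRE ATT&CK IDs,
# but the selection is illustrative.
REQUIRED_TECHNIQUES = {
    "T1078": "Valid Accounts",
    "T1098": "Account Manipulation",
    "T1530": "Data from Cloud Storage",
}

# Hypothetical detection rules, each tagged with the techniques it covers.
DETECTIONS = {
    "impossible_travel_login": {"T1078"},
    "new_iam_key_for_service_account": {"T1098", "T1078"},
}


def coverage_gaps(required, detections):
    """Return required technique IDs that no detection rule is mapped to."""
    covered = set().union(*detections.values()) if detections else set()
    return sorted(set(required) - covered)


gaps = coverage_gaps(REQUIRED_TECHNIQUES, DETECTIONS)
print(gaps)
```

Here the only gap is T1530: no rule in the hypothetical set watches for reads from cloud storage.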

Pega's relationship with Google Cloud is narrower than some of the companies in this article, but it's pointed at two of the harder problems in enterprise SaaS: giving customers a reliable API to build against, and keeping a multi-tenant cloud platform secure at scale. Apigee and Chronicle are the tools they reached for to solve them.

Healthcare · Rochester, Minnesota

GKE

Cloud Run

Cloud SQL

Apigee

Vertex AI

Mayo Clinic is the largest integrated nonprofit medical group practice in the world, with major campuses in Rochester, Phoenix/Scottsdale, and Jacksonville, and roughly 48,000 employees.

Mayo is building a healthcare-native cloud platform on Google Cloud. The effort is called Mayo Clinic Platform, and the goal is to make it possible to securely exchange healthcare data between systems and applications, with the kind of interoperability that the industry has been promising for decades but rarely delivered. The reason this is hard is that healthcare data lives inside a thicket of legacy standards and strict regulations, and most cloud-native architectures weren't designed with any of that in mind. Mayo's bet is that GCP is the right foundation to build it on anyway.

The platform is built on the standard GCP toolkit. GKE runs the containerized workloads. Cloud Run handles the serverless side. Cloud SQL backs the relational data. Apigee sits at the edge as the API gateway, which matters a lot in healthcare because every external integration with another hospital system, payer, or research partner has to be mediated, authenticated, and logged. Identity-Aware Proxy, VPC Service Controls, and Cloud Armor enforce the security posture that the regulatory environment requires.

Sitting alongside that is Mayo's AI Factory, a separate GCP-based platform for building and deploying AI agents in clinical and research settings. The Research Shield team uses Apigee for the API surface, Vertex AI for model training and serving, and Google's Agent Development Kit to build agentic workflows that can operate over Mayo's data with the right guardrails in place. The work is specifically about making generative AI safe and useful in a regulated healthcare environment, which is its own kind of engineering problem.

What makes Mayo's GCP usage distinctive is the constraint set. Most cloud-native companies are building for scale and speed. Mayo is building for scale, speed, and the requirement that every data flow be auditable, every API call be logged, and every AI output be safe enough to put in front of a clinician. The fact that they've chosen GCP as the platform to do all of that, and built two separate but coordinated platforms on top of it, says something about where they think healthcare cloud infrastructure is heading.

Healthcare · Dublin, Ohio

GKE

Cloud Functions

Apigee

BigQuery

Dataflow

Pub/Sub

Looker

Vertex AI

Cardinal Health is one of the largest healthcare companies in the United States, distributing pharmaceuticals and medical products to hospitals, pharmacies, and other providers across the country.

Google Cloud is Cardinal Health's preferred cloud platform across virtually every part of their technology stack.

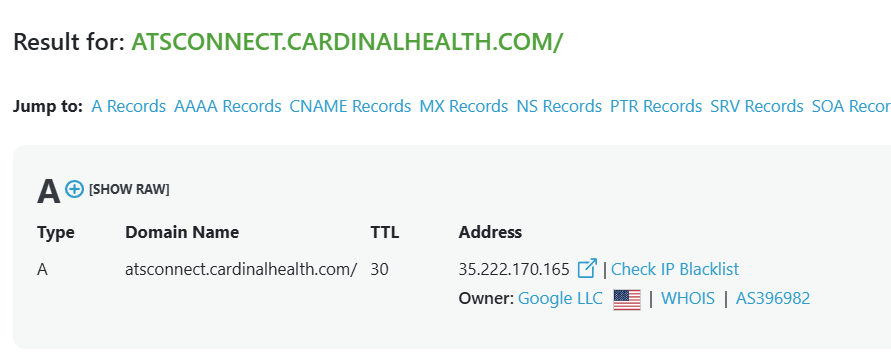

One clear example is their customer-facing ordering platforms. The main B2B ecommerce platform, which handles medical product orders for hospital systems and healthcare providers, runs on GCP with Google Cloud Functions, GKE, and Apigee at the core alongside Java Spring Boot. Advanced Therapy Connect, a portal Cardinal Health launched in early 2025 to unify cell and gene therapy ordering, runs on Google Cloud too, as you can see from the DNS lookup above.

Underneath all of it sits a data platform built entirely on GCP. Multiple data engineering teams build production pipelines using BigQuery, Cloud Dataflow, Cloud Pub/Sub, and Airflow, feeding analytics and visualization layers through AtScale and Looker.

The most forward-looking investment is their AI Center of Excellence, which they call augIntel. The team builds Cardinal Health's enterprise AI platform on Vertex AI, with Gemini Enterprise paired with embedding models and vector databases to power RAG pipelines that let LLMs answer questions over internal data. They use this stack to build forecasting and risk prediction models, classification systems for healthcare data, and AI agents, built with Google's Agent Development Kit, for use cases across the company.
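If RAG is unfamiliar, the core loop is small: embed the question, find the most similar documents, and put them in front of the model as context. The sketch below shows that loop with a toy bag-of-words "embedding" and an in-memory list standing in for a real embedding model and vector database. None of it is Cardinal Health's code, and the sample documents are invented.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: word counts. A real system would call a model."""
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, documents, k=1):
    """Return the k documents most similar to the question."""
    q = embed(question)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(question, documents, k=1):
    """Place the retrieved context ahead of the question for the LLM."""
    context = "\n".join(retrieve(question, documents, k))
    return f"Context:\n{context}\n\nQuestion: {question}"


docs = [
    "returns for surgical gloves are accepted within 30 days",
    "orders placed before noon ship the same day",
]
prompt = build_prompt("when do orders ship", docs)
print(prompt)
```

The string returned by `build_prompt` is what would be sent to the LLM. The model call itself is left out because it depends on the provider.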

Kidney Care · Denver, Colorado

Cloud Spanner

BigQuery

Vertex AI

GKE

Apigee

DaVita is one of the largest kidney care providers in the United States, operating more than 2,700 dialysis centers and treating over 200,000 patients nationwide.

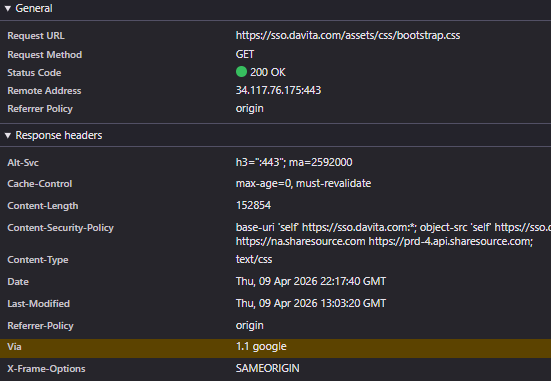

GCP is the foundation of DaVita's most important clinical system. DaVita built their Center Without Walls platform (CWOW) on Google Cloud over five years, choosing it as the underlying infrastructure for a complete overhaul of how kidney care is delivered. The platform replaced a decentralized, fax-based documentation system with a unified clinical operating system now live across all of their dialysis centers.

The architecture is built around three GCP services: Cloud Spanner as the system of record for electronic health records, BigQuery for analytics, and Vertex AI for machine learning. Spanner handles the write volume from 30 million dialysis treatments a year, and Spanner change streams pipe data into BigQuery in about 15 seconds, down from 60. That speed is what lets a physician see fresh patient labs and medication data within seconds of a treatment, across all 200,000 patients and 45,000 clinicians on the platform.
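Conceptually, a change stream is an ordered log of inserts, updates, and deletes that a downstream copy replays to stay current. The sketch below shows that replay step in plain Python. The record fields (`commit_ts`, `key`, `mod_type`, `new_values`) are simplified, hypothetical names, not the actual Spanner record format, and the sample values are invented.

```python
def apply_changes(table, changes):
    """Apply change records to a dict keyed by primary key, in commit order."""
    for change in sorted(changes, key=lambda c: c["commit_ts"]):
        key = change["key"]
        if change["mod_type"] == "DELETE":
            table.pop(key, None)
        else:  # INSERT or UPDATE: merge the new column values
            table.setdefault(key, {}).update(change["new_values"])
    return table


changes = [
    {"commit_ts": 2, "key": "p1", "mod_type": "UPDATE",
     "new_values": {"lab_value": 4.8}},
    {"commit_ts": 1, "key": "p1", "mod_type": "INSERT",
     "new_values": {"lab_value": 5.1, "unit": "mmol/L"}},
    {"commit_ts": 3, "key": "p2", "mod_type": "INSERT",
     "new_values": {"lab_value": 4.2}},
    {"commit_ts": 4, "key": "p2", "mod_type": "DELETE", "new_values": {}},
]
analytics_copy = apply_changes({}, changes)
print(analytics_copy)
```

Sorting by commit timestamp matters: the records above arrive out of order, and replaying them as received would leave the older lab value in place.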

The most interesting part is what's happening on top of CWOW. DaVita's AI team deploys machine learning models on Vertex AI directly into the CWOW workflow, so when a clinician opens a patient's chart, the system can flag hospitalization risk, predict treatment complications, or surface the next-best clinical action in real time. The team is now extending the same stack into agentic AI, using Google's Agent Development Kit, Vertex AI Agent Builder, and Apigee as the secure inference gateway between agents and enterprise data. The goal is a layer of AI assistance behind every patient interaction, running on the same GCP infrastructure as the rest of the clinical system.

Healthcare · St. Louis, Missouri

Vertex AI

BigQuery

GKE

Cloud Run

Cloud SQL

Pub/Sub

Ascension is one of the largest nonprofit Catholic health systems in the United States, operating 95 hospitals and a network of care sites across 16 states with over 99,000 associates.

Ascension's most ambitious bet on Google Cloud is a dedicated AI organization called the Ascension Data Science Institute, or ADSI. It was set up in 2019 with an explicit mandate: use advanced analytics and machine learning to improve clinical care and patient safety across the entire health system. The job listing for the Data Science Manager role leading this work calls for "advanced AI solutions that directly improve patient safety and clinical quality," and the team is building those solutions on GCP and Vertex AI.

ADSI's clinical AI team builds predictive machine learning models on structured electronic health record, billing, and claims data, and runs LLM workflows on Vertex AI to extract insights from unstructured clinical notes. The same Vertex AI stack powers Generative AI products for clinicians, with explicit attention to fairness and bias because the outputs feed into bedside care decisions. BigQuery is the analytics warehouse underneath all of it, joining clinical EHR data with revenue cycle, registry, and pharmacy data to give the data science team a unified view across the system.

GCP isn't just where ADSI runs. It's also where Ascension's patient-facing applications live. The Growth Marketing and Digital Experience team builds the healthcare applications that patients and clinicians interact with on GCP using Java Spring Boot microservices, GKE, Cloud Run, Cloud SQL, and Pub/Sub. That gives Ascension a coherent path from the data warehouse to the AI models to the applications where the AI assistance actually shows up in front of clinicians.

Healthcare · Woonsocket, RI · BigQuery, GKE, Dataflow, Dataproc, Vertex AI

BigQuery

GKE

Dataflow

Dataproc

Vertex AI

CVS runs the kind of healthcare data problem that BigQuery was built for. Every pharmacy claim, every Aetna member record, every Caremark formulary decision, every Medicare Stars quality measure flows through pipelines that need to be queryable at scale and defensible under audit. That's why CVS standardized on Google Cloud as the destination for its biggest data workloads and built the organization around it.

The work spans the company. Caremark's PBM analytics teams lean on BigQuery to make sense of pharmacy claims, rebates, and utilization patterns that drive billions in spend. Feeding into that, the Eligibility team writes Python and Spark jobs on Dataproc to keep member data flowing between systems. Over on the Aetna side, Medicare Stars data scientists train and deploy models on BigQuery, Dataflow, and Dataproc to find the interventions that move quality scores. The same data infrastructure backs CVS Pharmacy's shipment tracking pipelines. Different business units, one backbone.

Holding the whole thing together is an Enterprise Cloud Tool Enablement and Strategy team that exists for one reason: getting CVS's analytics and BI tools onto Google Cloud cleanly, with the right networking, IAM, and BigQuery data governance to satisfy a HIPAA-regulated environment. Most companies treat cloud as infrastructure. CVS treats GCP as a platform worth staffing a dedicated organization to defend.

Financial Services · New York, NY · BigQuery, GKE, Apigee, Cloud Composer, Dataproc, Vertex AI

BigQuery

Apigee

GKE

Cloud Composer

Dataproc

Vertex AI

Amex is running one of the most ambitious GCP migrations in financial services. The company is consolidating its data, its AI workloads, and its partner-facing APIs onto a single Google Cloud foundation, and everything new gets built there.

The center of it is LUMI, Amex's Google Cloud platform for credit reserving. LUMI runs CECL and IFRS9 reporting and decides how much money the company sets aside against future losses, which is about as core as a financial workload gets. Global Servicing powers its contact center AI on BigQuery and Vertex AI. Global Loyalty is moving its big data stack off on-prem and into BigQuery to join it. The data and AI work that defines how Amex operates is converging on Google Cloud.

The same goes for everything outside the company. Amex runs api.americanexpress.com on Apigee, and that's the single gateway every merchant, fintech, and aggregator hits when they integrate with Amex, from fraud checks to B2B payments to the new Agentic Commerce APIs for AI checkout. Internal data platform on Google Cloud, external partner platform on Google Cloud. That's the strategy.

Financial Services · Frankfurt, Germany · BigQuery, GKE, Dataproc, Cloud Composer, Vertex AI

BigQuery

GKE

Dataproc

Cloud Composer

Dataflow

Looker

Vertex AI

Deutsche Bank picked Google as the close partner for its cloud migration, and the clearest example of that bet is OneDP. OneDP is the bank's new strategic data platform for its Private Bank division, the unit that serves Deutsche Bank's wealth management and retail banking customers. The bank built OneDP entirely on Google Cloud, and it's using the platform as the destination for moving a significant share of the Private Bank's on-prem applications onto GCP. The target stack is BigQuery, Dataproc, Cloud Composer, Cloud Run, and Pub/Sub. Those services handle the data warehousing, reporting, and analytics that the German Private Bank runs on.

Sustainability is the other major workload on GCP. Deutsche Bank is building its Sustainability Technology Platform on Google Cloud. That platform powers the bank's ESG risk products, sustainable finance applications, and the CSRD regulatory reports it submits to European supervisors. BigQuery, Dataflow, and Looker handle the data engineering and BI underneath. The global team building it sits inside the bank's Cary, NC technology center.

The commitment shows up at the front door too. db.com resolves to a Google LLC IP and serves traffic through Google's infrastructure, which means the bank's primary public surface runs on Google Cloud as well. The internal data platform runs on Google Cloud. Sustainability reporting runs on Google Cloud. The public web presence runs on Google Cloud. That is the bet Deutsche Bank has made.

Financial Services · Dallas, TX · Vertex AI, BigQuery, GKE, Apigee, Gemini, Cloud Run

Vertex AI

BigQuery

GKE

Apigee

Gemini

Cloud Run

Pub/Sub

Looker

Document AI

Mr. Cooper services mortgages for millions of homeowners, and the company is rebuilding most of that customer-facing experience around AI on Google Cloud. The flagship is Pyro, Mr. Cooper's internal ML SaaS platform that runs on GCP. Pyro is what puts Mr. Cooper's machine learning models in front of actual borrowers, powering the apps that capture customer information during loan applications, the APIs behind mortgage payments, and the loan servicing systems homeowners interact with every month. Mr. Cooper monitors Pyro through Splunk and New Relic and runs a dedicated team whose only job is keeping it healthy.

The broader AI/ML practice runs on the same stack. Mr. Cooper builds its chatbots, voicebots, search systems, and knowledge management tools on Gemini and Vertex AI, using completions, embeddings, vector search, RAG, and fine-tuning to handle the kinds of questions that flood a mortgage servicer's call center. BigQuery handles the analytics underneath, including BQML for in-warehouse ML and Looker Studio for the dashboards on top. GKE, Cloud Run, Pub/Sub, Cloud Functions, and Cloud SQL fill out the rest of the application infrastructure that these AI products run on.

Mr. Cooper's APIs run on Google Cloud too. api.mrcooper.com resolves to a Google IP, and the company has publicly described how it built its document processing platform API-first on Apigee, with Document AI and Vertex AI handling classification and extraction on millions of mortgage documents. Apigee is the layer that exposes those AI capabilities as APIs to internal teams and external partners.

Every loan application, every payment question, every servicing call gets routed through models, apps, and APIs that live on GCP, and Mr. Cooper is hiring to keep expanding what runs there.

Financial Services · London, UK · BigQuery, GKE, Dataflow, Pub/Sub, Dataproc, Cloud Composer

BigQuery

GKE

Dataflow

Pub/Sub

Dataproc

Cloud Composer

Cloud Storage

HSBC is one of the largest banks in the world, and the bank has picked Google Cloud as the foundation for some of its most consequential workloads. The clearest example is iWPB Data Tech, the data platform powering HSBC's International Wealth and Premier Banking division. HSBC built iWPB Data Tech on GCP to be the single place where customer data, account data, and transaction data from the wealth and retail businesses get warehoused, analyzed, and turned into the regulatory reports the bank has to file with supervisors around the world. BigQuery, Dataflow, and Pub/Sub do the ingestion, ETL, and analytics underneath.

The Cash Equities Trading platform is the other big workload. HSBC runs the data engineering behind its equities trading on Google Cloud so that traders can see and act on market activity in real time. Apache Beam, Google Dataflow, and Apache Kafka handle the low-latency, high-throughput stream processing that trading desks demand. Putting an equities trading platform on GCP is a different kind of commitment than putting a reporting workload on it, because trading systems cannot tolerate downtime or latency drift, and HSBC has decided Google Cloud meets that bar.

GCP also runs underneath HSBC's payments modernization. The bank is rebuilding its Global Payments Innovation infrastructure on Google Cloud, with engineering teams integrating third-party platforms, building CI/CD pipelines, and migrating workloads onto the GCP data platform that handles HSBC's cross-border payment flows. Wealth and retail banking, equities trading, and cross-border payments all converge on the same Google Cloud foundation, which is how you can tell HSBC has made GCP its strategic platform rather than just one of several options.

Financial Services · Atlanta, GA · Bigtable, BigQuery, Vertex AI, Apigee, Dataflow, Cloud Composer, GKE

Bigtable

BigQuery

Vertex AI

Apigee

Dataflow

Cloud Composer

GKE

Cloud Storage

Equifax is the most committed Google Cloud customer in credit reporting, and it isn't close. After the 2017 breach, the company kicked off a multi-year program to rebuild itself on GCP, and the result is the Equifax Cloud, the platform that now runs the company's entire credit business. Equifax shut down 57 global data centers and migrated 37,000 customers onto GCP, and the company's CEO has publicly stated that the cloud transformation is essentially complete.

The center of the Equifax Cloud is the Data Fabric, the company's named data platform that unifies more than 100 previously siloed data sources into a single governed structure. Bigtable is the foundation. Equifax built the Data Fabric so that when a consumer walks into a store to finance a phone, the lender can pull a credit file and get a response back in under 100 milliseconds. BigQuery, Dataflow, Cloud Composer, and Cloud Storage handle the ingestion, ETL, batch processing, and analytics that move data from Equifax's suppliers into the products its B2B customers consume through APIs.

The next phase is AI. Equifax is building EFX AI on Google Cloud Vertex AI, combining Vertex AI with its proprietary NeuroDecision Technology and the data sitting in the Data Fabric. The first big product to come out of this is OneScore, a credit score that incorporates traditional credit data, alternative credit data, and signals like cell phone and utility payments.

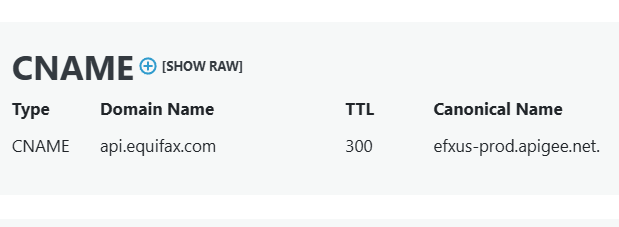

The way the outside world reaches all of this is through Apigee. api.equifax.com is the Apigee-managed gateway that fronts Equifax's developer platform, and every lender, fintech, and partner that pulls a credit file or runs a score from Equifax programmatically goes through it. The Data Fabric, EFX AI, and the API surface that monetizes both all run on the same Google Cloud foundation.

Consumer Goods · Cincinnati, OH · Google Cloud

Google Cloud Platform

BigQuery

Vertex AI

Procter & Gamble is the giant behind Pampers, Tide, Gillette, Oral-B, and Pantene. Their products reach nearly five billion consumers around the world.

The most interesting thing about how P&G uses Google Cloud is what they've built on top of it: a homegrown AI platform they call the AI Factory. It's now well-known enough that Harvard Business School wrote a case study about it in 2025.

The point of the AI Factory is to make it dramatically faster for P&G to turn an idea into a working AI tool. Their CIO has said the platform cuts the time to deploy a new model by about six months. Instead of every team rebuilding the same plumbing from scratch, data scientists get instant access to company data, reusable code libraries, and a standard set of tools for testing and shipping models.

The results show up in surprisingly specific places. There's a Pampers app called My Perfect Fit that asks parents a few questions about their baby and recommends a diaper size, getting it right 90 percent of the time. In Brazil, the AI Factory powers a system that bundles customer orders into truck-sized loads based on what stores actually need, which has cut out-of-stock rates by 15 percent. The same platform is also being used to design new fragrances and predict supply chain disruptions.
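The article's sources don't say how the Brazil load-building system works internally. To give a feel for the underlying problem, here is a classic heuristic, first-fit decreasing bin packing, swapped in as a stand-in. The function name, order sizes, and capacity are invented for illustration.

```python
def bundle_orders(order_sizes, truck_capacity):
    """Pack orders into truck loads using first-fit decreasing."""
    loads = []  # each load is a list of order sizes
    for size in sorted(order_sizes, reverse=True):
        if size > truck_capacity:
            raise ValueError(f"order of size {size} exceeds truck capacity")
        for load in loads:
            if sum(load) + size <= truck_capacity:
                load.append(size)
                break
        else:
            # No existing truck has room, so start a new one.
            loads.append([size])
    return loads


loads = bundle_orders([4, 8, 1, 4, 2, 1], truck_capacity=10)
print(loads)
```

With these numbers the six orders fit into two full trucks. A production system would also weigh delivery windows, routes, and what each store actually needs, all of which this sketch ignores.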

More than 200 data scientists across nine countries use the AI Factory every day. They feed it enormous amounts of data, with 1.5 terabytes of consumer touchpoints flowing in daily and models trained on the behavior of 500 million people.

Below the AI Factory, Google Cloud quietly runs the consumer-facing machinery too. The platform that handles digital coupons, samples, and loyalty programs across P&G's brand websites lives on Google Cloud. So does the system that powers their paid advertising, which uses Google's BigQuery, a fast database for huge amounts of data, to figure out which audiences to reach on which platforms.

Retail · Boston, MA · Google Cloud

Google Cloud Platform

Kubernetes

BigQuery

Wayfair is the giant online furniture and home goods store. You've probably scrolled through their site looking at sofas or rugs at some point. They sell more than 14 million items, and most of their business comes from people coming back to buy again, which is why the shopping experience matters so much to them.

What's interesting about Wayfair's relationship with Google Cloud is how deeply it's woven into the actual products you experience as a shopper. The website you browse, the prices you see, and the customer service you get when something goes wrong all run on Google Cloud in some way.

Take pricing as the clearest example. Wayfair used to set prices the slow way: a giant overnight job that crunched the whole catalog while everyone was asleep. They've been rebuilding it into a live system that updates prices on the fly, handling more than 10,000 requests a second using Google Cloud and a stack of streaming tools like Kafka. That's the difference between a price that's stale by morning and one that responds to demand in real time.

The storefront itself has gone through a similar shift. The website runs on Google Cloud using Kubernetes, which is a tool that lets them run thousands of small pieces of software side by side and update them without taking the site down. They serve millions of requests a day this way, and because each piece is deployed independently, a small team can ship a change to one corner of the site without breaking everything else.

Behind the scenes, Wayfair's data team uses Google's BigQuery to make sense of every customer service call and chat. They're tracking what went wrong, what the agent did about it, and how it was resolved, all so they can spot patterns and fix the underlying issues. The dataset they're working with runs into hundreds of terabytes.

The arc over the last few years tells a clear story. Wayfair started by lifting pieces of their old systems into Google Cloud. More recently, they've been rebuilding things from scratch to take advantage of what the cloud can actually do, such as real-time pricing, and adopting newer web technologies like React 19 and Server Components on the storefront. The cloud went from a place to put their stuff to the engine their business runs on.

Retail · Vertou, France · Google Cloud

Google Cloud Platform

BigQuery

Kubernetes

Maisons du Monde is a French furniture and home decor chain. They sell affordable, stylish furniture across Europe through stores, their website, and a marketplace, with around 8,000 employees.

The interesting thing about Maisons du Monde's use of Google Cloud is that the company has built a sizable in-house data team around it, with about 30 to 40 specialists whose entire job is to make sure other parts of the business can actually use their data. The data team isn't a side function. It's a core piece of how the company operates.

The technical setup behind all this is worth a closer look. At the center is BigQuery, Google's tool for crunching huge amounts of data quickly. Around it, the team has built a pipeline using Apache Airflow to schedule and move data automatically (think of it as a conductor making sure each step happens in the right order), Docker and Kubernetes to run everything reliably, and Terraform to manage the whole setup as code rather than by hand. On top of all that sits a tool called Qlik Sense, which is what employees across the business actually open to look at charts and reports.
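The "conductor" idea can be illustrated without Airflow itself. The following is a minimal sketch using only Python's standard library, not Maisons du Monde's actual pipeline: the step names are hypothetical, and `graphlib.TopologicalSorter` stands in for the dependency ordering a scheduler like Airflow performs before running tasks.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline steps (assumed for illustration).
# Each key maps to the set of steps that must finish before it can start.
PIPELINE = {
    "extract_sales": set(),
    "extract_inventory": set(),
    "load_to_warehouse": {"extract_sales", "extract_inventory"},
    "build_reporting_tables": {"load_to_warehouse"},
    "refresh_dashboards": {"build_reporting_tables"},
}


def run_order(pipeline):
    """Return one valid execution order in which every step follows its dependencies."""
    return list(TopologicalSorter(pipeline).static_order())


order = run_order(PIPELINE)
print(order)
```

The two extract steps have no dependencies, so they may appear in either order, but the load step always comes after both and the dashboard refresh always comes last.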

In practice, this means almost every team at Maisons du Monde, from buyers picking next season's products to the supply chain group deciding what to ship where, gets help from this data group to translate their questions into dashboards and analyses they can use day to day. The team's job is partly technical and partly teaching, since they also run training sessions to help non-technical employees use the tools themselves.

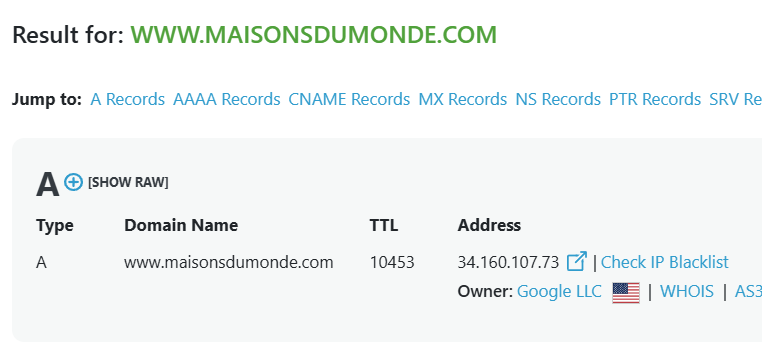

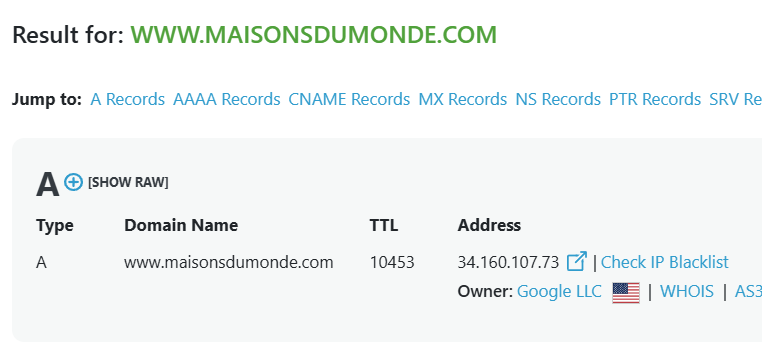

Their relationship with Google Cloud has gotten serious enough that Google has publicly held them up as an example of how to use its cloud tools well. The website itself, which is what most customers experience, also runs on Google Cloud and uses what's called a "headless" setup, which is a way of building a site where the storefront customers see is separated from the systems behind it. That separation makes it easier to redesign the front of the site or plug in new tools without rebuilding everything underneath.

Looking at the arc over the last few years, you can see the data team grow from a starting group of about 20 people to roughly 40, and the technology stack get more sophisticated alongside it. What started as a basic setup with BigQuery and a few dashboards has grown into a full platform with automated data pipelines, machine learning models that the engineering team puts into production, and a formal data governance program that sets standards for how information is defined and tracked across the company.

Retail · Houston, TX · Google Cloud

Google Cloud Platform

Kubernetes

BigQuery

Dataflow

Tailored Brands is the company behind Men's Wearhouse, Jos. A. Bank, K&G Fashion Superstore, and Moores. They're North America's biggest specialty retailer of menswear, known for suits and tailoring, with about 1,000 stores across the US and Canada and over 35 million customers.

The interesting thing about how Tailored Brands uses Google Cloud is that they're in the middle of a major shift: moving away from running everything in their own data centers and into the cloud. They came out of the pandemic with a three-year plan to overhaul their technology, and Google Cloud is the foundation they picked.

In practice, this looks like a slow rebuild of the company's plumbing. Old applications that used to live on physical servers in a Houston office are being moved to Google Cloud, sometimes lifted as is and sometimes redesigned from scratch. The team running this work uses Google Cloud's Kubernetes service, which is a tool that lets them run lots of small pieces of software side by side, along with databases like BigQuery for crunching large amounts of data and Spanner for handling things like transactions across stores.

The other big piece is how data flows between systems. A retailer like Tailored Brands has dozens of separate programs that all need to talk to each other, including the website, the point-of-sale registers in stores, inventory systems, pricing systems, and the catalog of every product. They've leaned heavily on Google Cloud Dataflow, which is a tool for moving and transforming data in real time, to keep all those pieces in sync. Without it, an item that goes out of stock in a store might still show up as available online for hours.
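To make the out-of-stock example concrete, here is a small sketch of the underlying idea: applying a stream of stock events to a shared availability view so the website sees a sale as soon as the event arrives. It is plain Python rather than Dataflow, and the event shape, SKU, and store identifiers are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StockEvent:
    sku: str
    store_id: str
    delta: int  # negative for a sale, positive for a restock


def apply_events(stock, events):
    """Fold events into a {(sku, store_id): units} view, never dropping below zero."""
    updated = dict(stock)
    for event in events:
        key = (event.sku, event.store_id)
        updated[key] = max(0, updated.get(key, 0) + event.delta)
    return updated


def available_online(stock, sku):
    """An item is shown as available if any store still holds at least one unit."""
    return any(units > 0 for (s, _store), units in stock.items() if s == sku)


# Hypothetical data: one suit left in one store, then it sells.
stock = {("SUIT-42R", "houston-01"): 1}
before = available_online(stock, "SUIT-42R")
stock = apply_events(stock, [StockEvent("SUIT-42R", "houston-01", -1)])
after = available_online(stock, "SUIT-42R")
print(before, after)
```

In a batch setup the second check would only flip after the next scheduled sync; processing events as they arrive is what closes that gap.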

Looking at the arc over the last few years, you can see the strategy maturing. Earlier on, the focus was on basic cloud setup work and juggling two providers at once, with both Amazon's cloud and Google's in play. More recently, Google Cloud has become the main platform, with Amazon's cloud now used mostly for specialized data work and Microsoft's Azure for back-office tools. The company has gone from experimenting with cloud to betting its three-year transformation plan on it.

Telecommunications · Paris, France · Google Cloud

Google Cloud Platform

BigQuery

Kubernetes

Orange is one of the largest telecom companies in the world. They run mobile and internet service for around 290 million customers across 26 countries, and they also have a separate arm called Orange Business that sells IT and networking services to large companies and governments.

The interesting thing about how Orange uses Google Cloud is that they're using it in three very different ways at the same time. Internally, it's the main cloud they use to modernize their own systems. Inside their cybersecurity arm, it's where they build new security tools. And inside their consulting arm, it's the platform they use to build solutions for their own customers. The same cloud shows up over and over, but for completely different reasons depending on which part of the company you look at.

The biggest internal use is around data. Orange has been pulling data from across all 26 of its country operations into a unified platform built on Google Cloud, with BigQuery at the heart of it. Around BigQuery, they've layered tools like Apache Spark, Kafka, and Apache NiFi for moving and processing data, and Grafana for keeping an eye on how everything is performing. One team, largely based in Romania, manages about 11 million customer devices like Wi-Fi routers and TV boxes across 12 of Orange's country operations and processes the constant stream of data those devices send back.

Orange Cyberdefense, the company's cybersecurity unit, runs a tool called Qevlar AI on Google Cloud. It's an AI-powered system that automatically investigates security incidents, the kind of work that used to take human analysts hours of digging through logs. The team uses Kubernetes to run it, along with security automation tools like XSOAR. They also handle pre-incident work, like helping clients improve their logging and running tabletop crisis exercises, all backed by the cloud setup.

The third piece is Orange Business, the consulting arm, which builds Google Cloud platforms for outside clients. They run the data platform for Carrefour, the giant French supermarket chain, processing terabytes of data every day to feed dashboards and APIs that the business uses to make decisions. They've built a public-sector data platform for the Belgian government using Google Cloud and Kubernetes. They also work with the cosmetics industry on data quality and governance, and with pulp and paper company Sappi on forecasting models that help cut energy costs.

Looking at the arc, you can see the company moving from being a Google Cloud user to being a Google Cloud builder. Earlier on, the focus was on basic data engineering and migrating older systems into the cloud. More recently, the work has shifted toward AI, with teams deploying machine learning models in production and experimenting with agent-based AI for things like security investigations and customer service.

Telecommunications · Carlsbad, CA · Google Cloud

Google Cloud Platform

BigQuery

Apache Airflow

Viasat is a satellite internet company that's been around for over 35 years. They provide internet to homes in rural areas, Wi-Fi on commercial flights, internet for ships at sea, and communications systems for the U.S. military. They're headquartered in Carlsbad, California and have around 6,700 employees.

The interesting thing about how Viasat uses Google Cloud is that it sits at the heart of how they actually run and measure their satellite service. Most of what makes a satellite internet business work (how much capacity each beam has, how fast passengers on a plane can stream, how much each customer should be billed) depends on processing huge amounts of data quickly. Viasat has built that data engine on Google Cloud, with BigQuery as the central warehouse.

One of the more concrete examples is in their commercial aviation business, where they sell Wi-Fi to airlines like Delta and JetBlue. There's a team at Viasat called Revenue Assurance that owns the systems measuring whether they're hitting the service agreements they've promised to airlines, things like minimum speed and uptime. They build data pipelines using Apache Airflow, Java, Python, and BigQuery to turn millions of in-flight connection events into the metrics that eventually show up on customer invoices. If a passenger streams a movie at 35,000 feet, the data confirming that session ends up in Google Cloud.
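As a rough illustration of turning connection events into service-agreement metrics, the sketch below computes uptime and average speed from a handful of samples and checks them against thresholds. It is a simplified stand-in, not Viasat's pipeline: the `ConnectionSample` fields, the flight identifier, and the threshold values are all assumptions, and the real work is described as running on Airflow and BigQuery.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConnectionSample:
    flight_id: str
    connected: bool
    mbps: float  # measured throughput; ignored when not connected


def sla_report(samples, min_mbps, min_uptime):
    """Summarize uptime and average speed, and flag whether both thresholds are met."""
    total = len(samples)
    if total == 0:
        return {"uptime": 0.0, "avg_mbps": 0.0, "meets_sla": False}
    up = [s for s in samples if s.connected]
    uptime = len(up) / total
    avg_mbps = sum(s.mbps for s in up) / len(up) if up else 0.0
    meets = uptime >= min_uptime and avg_mbps >= min_mbps
    return {"uptime": uptime, "avg_mbps": avg_mbps, "meets_sla": meets}


# Hypothetical samples for a single flight.
samples = [
    ConnectionSample("FL-100", True, 10.0),
    ConnectionSample("FL-100", True, 20.0),
    ConnectionSample("FL-100", False, 0.0),
    ConnectionSample("FL-100", True, 30.0),
]
report = sla_report(samples, min_mbps=15.0, min_uptime=0.9)
print(report)
```

Here three of four samples are connected, so uptime is 0.75 and the flight misses a 0.9 uptime threshold even though the average speed clears 15 Mbps.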

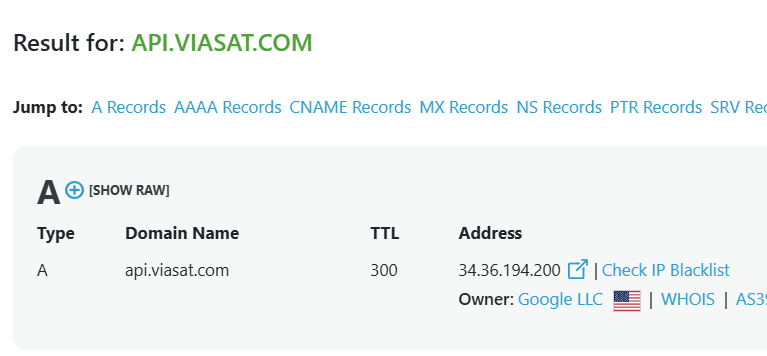

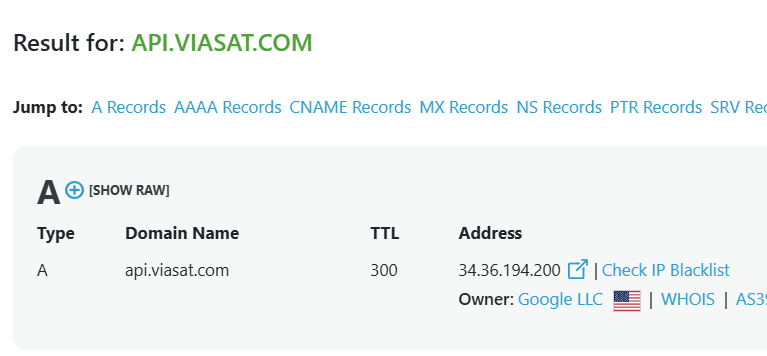

Viasat also runs their main developer API portal, developer.viasat.com, with Google Cloud as part of the stack. This is the system that lets airlines, business customers, and partners programmatically connect to Viasat services for things like managing accounts, checking network status, and integrating connectivity into their own apps. So when an airline's IT team needs to query whether a flight is online or pull usage data, those requests are flowing through infrastructure where Google Cloud is involved.

On the network side, there's a Capacity Engineering team that uses Google Cloud to figure out how much bandwidth to allocate where. They've been migrating their performance assessment tools onto Google Cloud and are now experimenting with putting AI agents on top, using models like Gemini and Claude to automate parts of capacity planning that used to be manual. The idea is that instead of an engineer staring at telemetry charts to decide how to balance load across satellites, an AI agent reads the same data and recommends actions.

Looking at the arc, you can see Viasat's use of Google Cloud expanding over time. Earlier on, the work was mostly about basic data engineering and migrating existing tools onto Google Cloud. More recently, they're going deeper into AI and machine learning, with teams building generative AI applications, deploying ML models in production, and even prototyping agent-based systems for things like network operations and capacity planning. For a satellite company, betting on the cloud as the brain behind the network is a meaningful shift.

Telecommunications · Bonn, Germany · Google Cloud

Google Cloud Platform

Sovereign Cloud

Kubernetes

Deutsche Telekom is one of Europe's biggest telecom companies. They run mobile and internet service for tens of millions of people across Germany and beyond, and they also hold a majority stake in T-Mobile US. Through a business arm called T-Systems, they sell IT services to other large companies.

The interesting thing about Deutsche Telekom and Google Cloud is that they aren't just a regular customer. The two have a deep partnership where Telekom resells Google's cloud to other German companies, often with extra layers wrapped around it for European data privacy and security rules.

The headline product from this partnership is something called the Sovereign Cloud. The basic idea is that a lot of European companies want the modern features of Google Cloud but worry about their data sitting on American infrastructure under American legal rules. Telekom's answer is to operate Google Cloud inside Germany under German governance, so a hospital, an automaker, or a government agency can use it without crossing those legal lines. Telekom builds the layer that handles security, compliance, and operations, while Google provides the underlying technology.

Internally, Telekom uses Google Cloud for itself too. They've built what's called a "landing zone," which is a standardized, pre-configured corner of Google Cloud that any team inside the company can plug into when they want to launch a new project. This saves their developers from having to set up security, networking, and access controls every time. The team that runs this is small and tight-knit, working out of Leipzig, Bonn, and Budapest, and it uses the same modern tools (Kubernetes, Terraform, the Go programming language) that any cutting-edge tech company would.

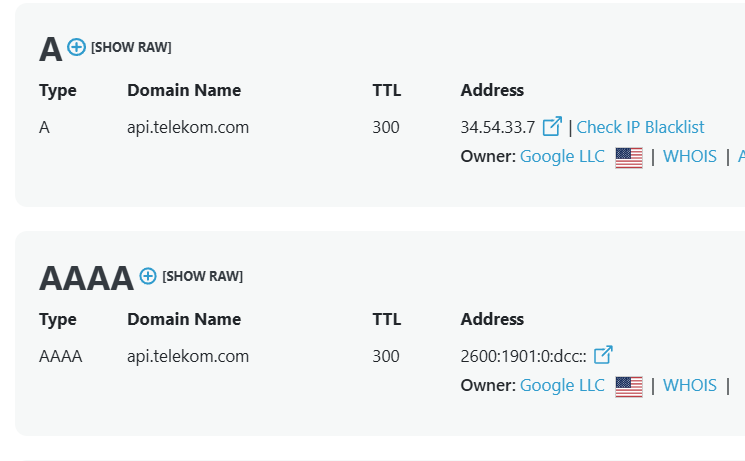

There's also a more unusual piece of the puzzle: Telekom has opened up parts of its actual phone network to outside developers, and that whole platform runs on Google Cloud too. Through a product called MagentaBusiness API, an app can ask the network for things like guaranteed low latency for a few minutes (useful for remotely operated vehicles or live broadcasts), or check whether a phone is currently roaming abroad before sending a message. It's the network itself, repackaged as something a programmer can call from their code. Google Cloud is the backbone that makes all of that available to developers around the world.

The arc here is interesting. A few years ago, Telekom's cloud work was mostly about internal modernization and basic Google Cloud setup. More recently, the focus has shifted toward selling Google Cloud as a service to others, with Telekom acting as the trusted European middleman that handles the regulatory and security headaches. They're not just using the cloud anymore. They're selling a more controlled version of it.

Telecommunications · Charlotte, NC · Google Cloud

Google Cloud Platform

BigQuery

Dataflow

Looker

Brightspeed is a relatively new internet provider that serves about 6 million homes and businesses across 20 states, mostly in the Midwest and South. They were created in 2022 when the private equity firm Apollo bought the local phone and DSL operations that used to belong to CenturyLink, and now they're spending around two billion dollars to upgrade those old copper lines to fiber.

The interesting thing about how Brightspeed uses Google Cloud is that they're building their entire data and analytics foundation on it from scratch, which is a rare luxury for a company in this industry. Most telecoms are stuck dragging along decades of old systems. Brightspeed got to start fresh, and they picked Google Cloud as the place to do it.

The center of their setup is BigQuery, Google's tool for storing and querying massive amounts of data. Around it, they've built a modern stack of pipelines that pull information out of customer billing systems, network equipment, the call center, and field operations. They use Google Cloud Dataflow for processing data in real time, Pub/Sub for moving messages between systems, and Looker for the dashboards that managers and analysts actually look at every day. They also use Dataplex, Google's data governance tool, to make sure the numbers in those dashboards are trustworthy.

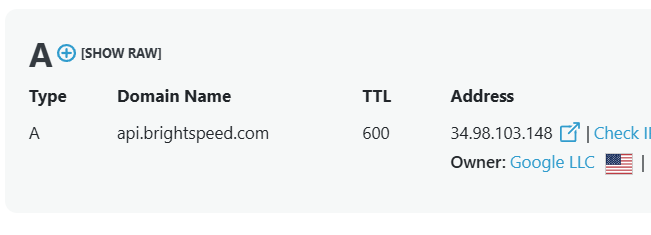

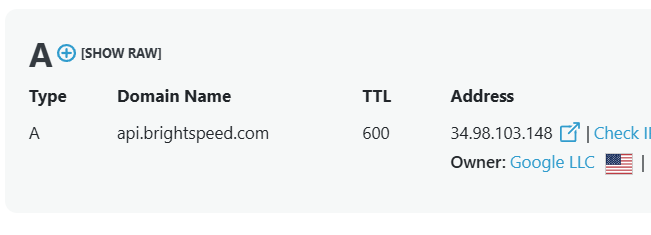

Brightspeed's customer-facing API also runs on Google Cloud. This is the system that powers their mobile app and the website where customers log in to pay bills, schedule installations, run speed tests, and manage their accounts. When millions of customers across 20 states tap their phone to check on a service appointment or update a payment method, those requests hit Brightspeed's Google Cloud setup.

Looking at the arc, you can see the team and ambitions growing. Earlier on, the focus was on basic data engineering and getting BigQuery set up. More recently, they've been hiring for AI and machine learning roles, building predictive models to forecast things like customer churn, network problems, and where to invest next in fiber buildout. For a company that's still essentially in startup mode despite serving millions of customers, betting on Google Cloud as the foundation has let them move much faster than a traditional telecom could.
